AI Assistants in Action

A Field Report on the Future of Corporate Learning

For the past year, we have been field-testing AI Assistants with learners. The experience has shaped how I think about learning, about AI, and about how the two can actually work together.

We called them coaches at first: wise sages you could glance over at from the field, the stage, or the desk. Early iterations had a cast of characters (a Moderator, an Expert, and an Improv), each trying to assist in their own way. There were many failures and even more unexpected outcomes. One manager spent considerable time attempting to jailbreak the AI. Another recruited it as a co-conspirator in an office mischief campaign. Another reimagined an office improv exercise as something closer to Orwell’s Animal Farm. Others struggled with basic questions: how to prompt, where to start, what to even ask. Plenty of conversations started strong and died on the vine.

But then there were the dialectic moments, ones that delivered on their intention. When those landed, the engagement was unmistakable. Learning transferred.

Corporate training, especially digital, is haunted by a single, persistent failure: it doesn’t stick. Knowledge is gained in the classroom, but wisdom is forged in application. The trend has been to break difficult content into bite-sized training. Atomic-level learning. The kind that lets you run a marathon a hundred yards at a time, with a certificate of completion at the end.

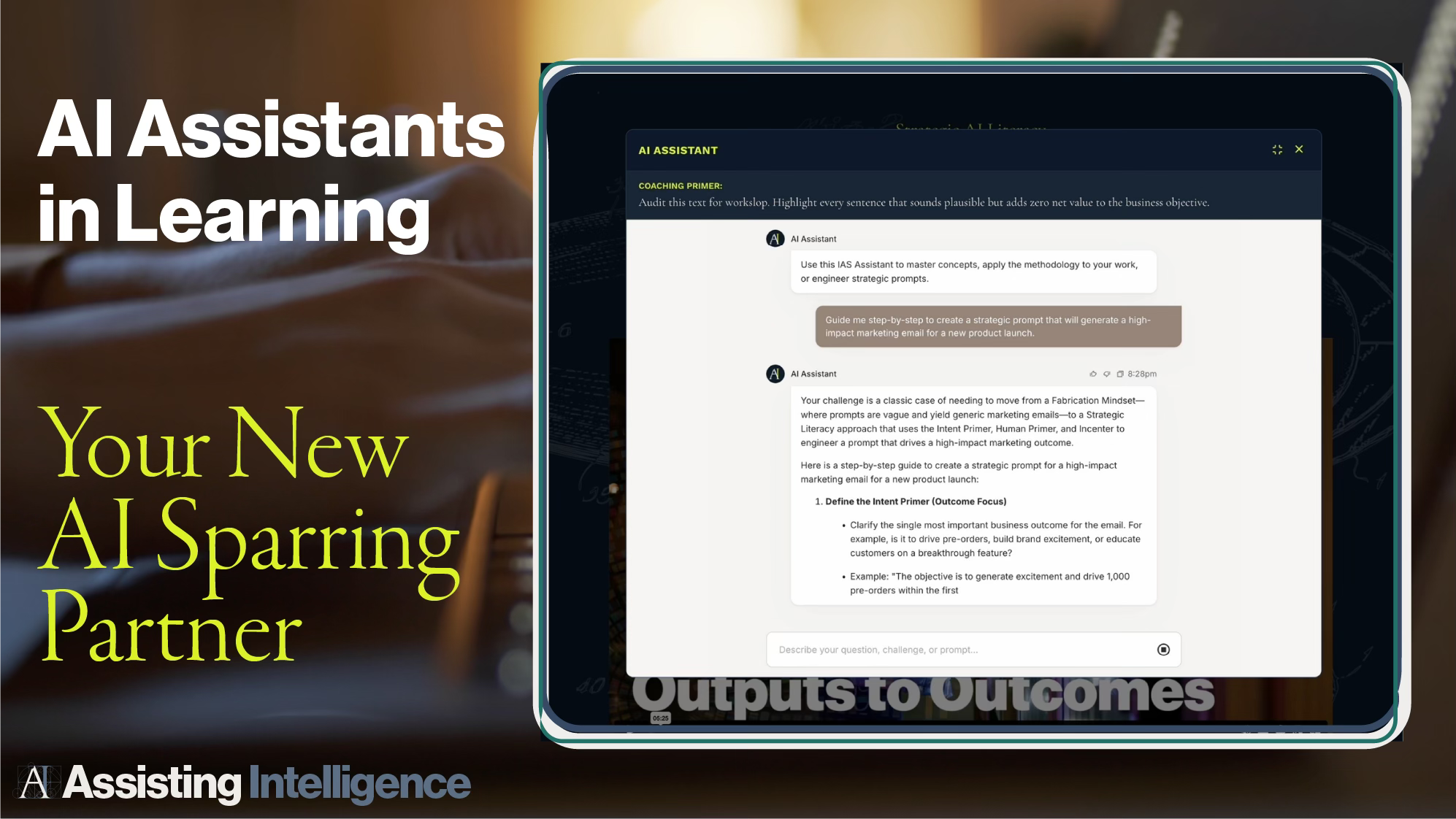

Last summer, we gave a new AI Assistant to dozens of front-line managers. This AI coach wasn’t a repository of information. It was a dialectic partner—a sparring partner for the real challenges waiting back at the desk. What it showed us provides a framework—validated in the field—for the future of learning.

From Theory to Action: AI as a Performance Bridge

The core challenge for any manager is translating theory into action. Traditional learning provides the models and the scripted role-plays, but managers return to their desks to face problems that don’t follow a script. This is the moment where learning is either applied or put on a shelf and forgotten.

This is where the AI Assistant stepped in. A confidential sparring partner, available at 9 PM on a Tuesday when the conversation is scheduled for Wednesday morning. Instead of providing a simple output, it created an outcome. The assistant didn’t give them a script. It walked them through the framework it was trained on, applied to their specific situation, and together they built a plan.

Two things happened. Managers got a safe space to rehearse. And they walked into those conversations with a plan.

Adjusting Focus: Developing the Organizational Picture

Traditionally, the ROI of training is a blurry snapshot measured through assessments, surveys, and anecdotes. These AI agents sharpened the picture.

By analyzing the anonymized transcripts of manager interactions, stakeholders can see exactly where their people are struggling. We didn’t read anyone’s conversations. We ran the anonymized data through AI analysis designed to surface patterns, not details. What we expected was a spread—delegation, time management, goal planning. What we found was one thing: giving constructive feedback. The single biggest hurdle was confidence in difficult conversations.

That’s not just a finding. That’s the picture coming into focus. Instead of guessing where to invest, you can see exactly where your people need help and act on it.

The Agent vs. The Oracle: Why Not Just Use a General AI?

Here’s the question I always get: why not just use an off-the-shelf, general-purpose GenAI? A general AI can certainly provide an answer. But it fails in three ways that matter.

Framework, Not Just Answers: A GenAI provides a plausible answer to one problem. This agent provides a consistent, repeatable framework that embeds a proprietary methodology directly into the manager’s toolkit.

Consistency at Scale: If fifty managers ask a GenAI for advice, they will get fifty different, inconsistent, and potentially off-brand answers. An engineered assistant is a single source of truth: your truth. All fifty managers receive coaching using the same models, the same voice, and the same framework. Without this, brand and strategy are blunted.

The Lost Insight: This is the most significant failure. When managers use a GenAI, the organization learns nothing. With a secure, internal agent, the anonymized transcripts create a rich dataset for analysis. An organization that outsources these conversations to a GenAI is choosing to be blind.

The Great Misdirection: AI in the Wrong Hands

Over the past year, these assistants revealed a fundamental flaw in how the corporate learning industry is approaching AI. The dominant trend is handing AI tools to L&D teams so they can crank out more content. More personalized. Faster.

This is a strategic error. It repeats the mistakes of the past decade—from gamification to micro-learning to the endless quest to “TikTok-ify” training. These trends share a common failure: they make learning smaller, shinier, and more bite-sized—easier to consume, but easier to ignore. They serve the producer, not the learner.

The real revolution is not using AI to create more content. It’s putting a carefully engineered AI into the hands of the learner. When you do, three things happen.

The Learner Gets Better, Faster: They control the engagement. They frame the need, the timing, the question. The assistant meets them where they are.

The Learner Has a Shared Experience: If all learning content is hyper-personalized, the shared language is lost. People can’t coach each other, can’t reference common frameworks, can’t build on a shared foundation. Learning must exist as a shared experience. AI Assistants are tethered to a common framework. They operate within it, not around it.

The Organization Gets Smarter: This is the insight most people miss. By analyzing aggregated, anonymized conversations, the organization gains a live view of what’s actually hard for its people.

The current industry approach—the AI-powered content factory—forfeits all three. It is the difference between building an AI that writes course outlines and building an AI that listens to your people and tells you what they actually need to learn.

The Real Work of AI in Learning

What I’ve learned over the past year is that building these AI Assistants takes considerable human effort, thought, and direction. Gathering the knowledge canon. Building the constraints, the prompts, the guidance and experience into the system. Testing. Training. Searching for insight flowing to the learner and back to the organization through reporting.

Doesn’t this intuitively sound about right? It’s what we’ve known about learning all along. So when someone says they can create a course in three clicks, ask yourself what’s actually being created. We’re not doing alchemy here. We’re mining insight and delivering it to the point of need.

AI can be an active participant in this process, but at no point can it do the thinking for you or make the work easy. If you hand over learning to an AI, you’re commoditizing your company’s IP.

The future of corporate learning is not a content strategy. It’s a confiding and assuring conversation. These assistants show that AI’s most profound application is not as a content producer, but as a dialectic sparring partner. A tool that paradoxically gives the learner more control of learning than they have had in decades, perhaps millennia*. A tool that creates a more human space for listening and response. It restores something corporate learning has largely lost.

This is the optimistic outcome for AI and learning. And for learners.